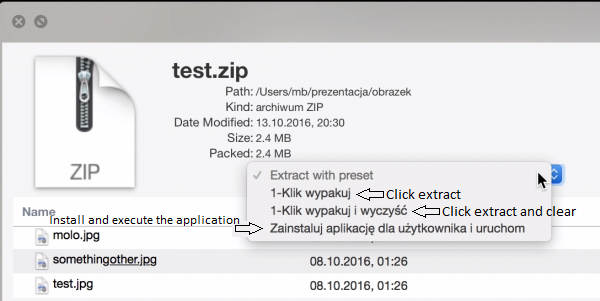

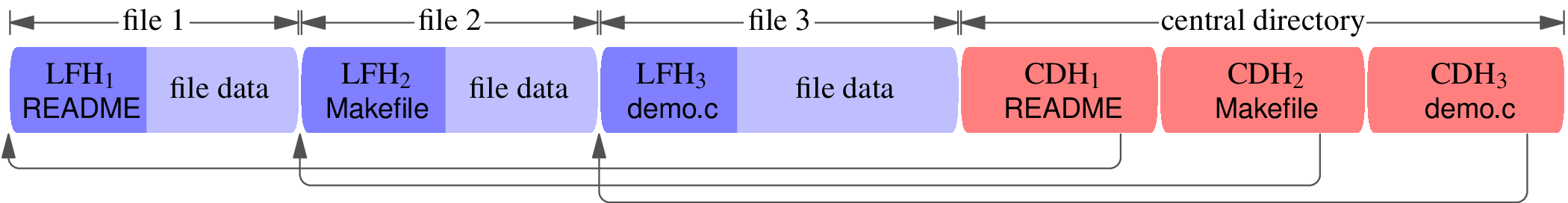

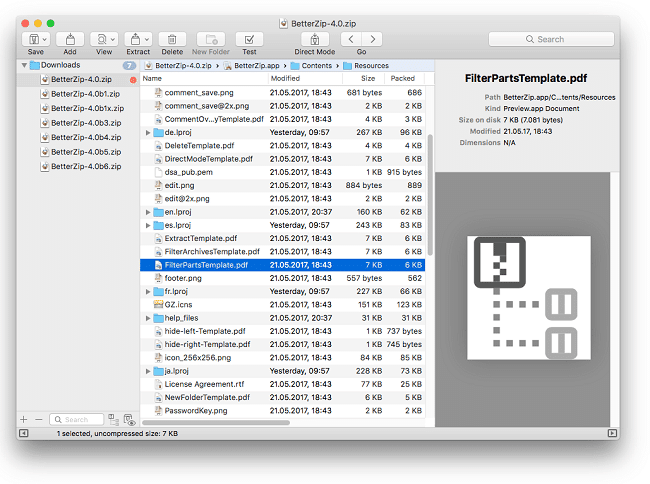

Local file headers are treated as both code and data:Īnd as part of a DEFLATE stream (file data). LEN is the number of data bytes in the block. The rest of the block consists of the following information: Thus making it a valid part of the DEFLATE stream.Įach block of compressed data begins with 3 header bitsīFINAL is set if and only if this is the last block of the dataīTYPE specifies how the data are compressed, as follows:ġ0 - compressed with dynamic Huffman codesĪny bits of input up to the next byte boundary are ignored. Solution: add a prefix that wraps the local file header To prevent them from being interpreted as DEFLATE data. (See overlap.zip, generated by the source code.) You will quickly think of many troubling questions. The zip file format specification is calledįor a specification, it's not very precise. The headers in the central directory and in theįiles contain (redundant) metadata such as the filename. Which is like a table of contents that points backwards Goal: high compression ratio without using recursion.Ī zip file consists of a central directory, Tries to work around the DEFLATE limitation

$ dd if=/dev/zero bs=1000000 count=1000 | gzip > test.gzĬompressed uncompressed ratio uncompressed_name The only universally supported compression algorithm isīut DEFLATE's maximum compression ratio is 1032. Haven't I heard this before? Limits of the DEFLATE compression algorithm $ time unzip -p zblg.zip | dd bs=32M of=/dev/null status=progress. $ wc -bytes zbsm.zip zblg.zip zbxl.zip 42374 zbsm.zip Source code / short demo source code link Next they run the intermediate data through a single " entropy coder" (arithmetic coding, or Huffman coding, or asymmetric numeral system coding) that's pretty much the same for every kind of data.A better zip bomb (dc303 talk) A better zip bomb In general, most data compression software is made up of these 2 parts.įirst they run the original data through a " transformation" or multiple transformations, also called "decorrelators", typically highly tuned to the particular kind of redundancy in the particular kind of data being compressed (JPEG's DCT transform, MPEG's motion-compensation, etc.) or tuned to the limitations of human perception (MP3's auditory masking, etc.). So already this 2-step process creates smaller files than either algorithm alone.Īs a bonus, typically the copy items are also Huffman compressed for more savings in storage space. Often those "copy items" are already much shorter than the original substring would have been if we had skipped the LZ77 step and simply Huffman compressed the original file.Īnd Huffman does just as well compressing the literal letters in the intermediate sequence as it would have done compressing those same letters in the original file. Then Huffman further compresses that intermediate sequence. LZ77 compresses an original file into an intermediate sequence of literal letters and "copy items". Letter-oriented Huffman is good at detecting and compressing files using the letter frequencies alone, but it cannot detect correlations between consecutive letters (common words and syllables). LZ77 is good at detecting and compressing these kinds of common words and syllables that occur far more often than you might guess from the letter frequencies alone. So what is the most common pair? You might guess "ee", "et", or "te" - nope, it's "th". In English, the 2 most common letters are (usually) 'e' and then 't'.

(Unfortunately, most data compression algorithms cannot be combined like this). use both Huffman and LZ77?īecause these 2 algorithms detect and remove 2 different kinds of redundancy in common to many data files (video game levels, English and other natural-language text, etc.), and they can be combined to get better net compression than either one alone.

Why both Huffman and LZ77?īut why does DEFLATE, Zstandard, LZHAM, LZHUF, LZH, etc. It's possible that the developers are using DEFLATE (or some similar algorithm), simply to be able to re-use tested and debugged software rather than writing something new from scratch (and taking who-knows-how-long to test and fix all the quirky edge cases).

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed